Language-modulated Actions using Deep Reinforcement Learning for Safer Human-Robot Interaction

Abstract

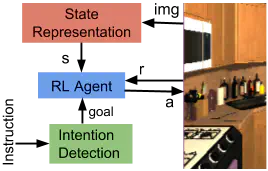

Spoken language can be an efficient and intuitive way to warn robots about threats. Guidance and warnings from a human can be used to inform and modulate a robot’s actions. An open research question is how such instructions and warnings can be integrated into the robot’s planning to improve safety. Our goal is to address this problem by defining a Deep Reinforcement Learning (DRL) agent that determines the intention of a given spoken instruction, especially in a domestic task, and generates a high-level sequence of actions to fulfill it. The DRL agent will combine vision and language to create a multi-modal state representation of the environment. We will also focus on how warnings can be used to shape the DRL agent’s reward, concentrating on recognizing the emotional state of the human during an interaction with the robot. Finally, we will use language instructions to determine a safe operational space for the robot.